This might be something good to discuss when covering chapters on Public Opinion and the Media.

Link to article

Link to US Civitas Facebook Discussion Thread

I think this could be useful for generating discussion about the freedom of expression. Do we really have a right to say whatever mean and hateful things we want to others? If, as even he seems to admit, we don’t have a moral right to do so, then why should we have a constitutional right to do so? Should we amend the Constitution to allow for restrictions on at least some kinds of hateful and hurtful speech? As noted in Chapter 6 of my textbook, this is a question that divides liberals. The ACLU wing says (along with libertarians and conservatives) that the only acceptable response to speech we dislike is to counter it with more free speech. Restricting speech we dislike, simply because we dislike it (or find it hurtful or hateful), is unacceptable. The other wing accepts the international human rights standard, which says freedom of speech must be balanced with respect for human dignity. Speech that is degrading is unworthy of legal protection. (One suspects that MLK, Jr. might have agreed with this, by the way.)

Link to US Civitas Facebook Discussion Thread

I think the entire letter would be useful when teaching the Presidency (e.g., the awesome responsibility of the office and the difference between the office and the person of the President), but I especially like the third point made by President Obama as a way to talk about fundamental principles of American government. And notice, also, the purposes of government Obama seems to assume he and Trump share in the first paragraph: “prosperity and security” are closely related to happiness/welfare and the securing of rights.

‘Read the Inauguration Day letter Obama left for Trump‘-CNN

Link to US Civitas Facebook Discussion Thread

Here is a non-partisan issue we can encourage our students to take action on. Congress is currently not fulfilling its constitutional duty to prepare for the 2020 census.

BTW, this blog, which is based on Cynthia and Sanford Levinson’s book “Fault Lines in the Constitution,” is a wonderful resource for anyone teaching the Constitution. Sanford wrote a book entitled Our Undemocratic Constitution, and is a leading proponent of the idea that much of our political dysfunction has its roots in design flaws in the Constitution. Cynthia is an education scholar focused on civic education. The books (Our Undemocratic Constitution and Fault Lines) and this blog encourage us to teach the Constitution from a critical perspective that counteracts the tendency for blind veneration in our political culture. Their approach is a stimulating way to get students thinking about the Constitution; the impact of institutional design; and the possibilities for, and obstacles to, exercising popular sovereignty.

Link to article

Link to US Civitas Facebook Discussion Thread

There’s a lot to think about here regarding the big picture on higher education and its intersection with politics. GSU is put in a favorable light. I’m trying to figure out if I agree we are doing “literally the thing America needs all of higher education to do.” But the statistics are fascinating, and important to keep in mind about our students.

Link to article

Link to US Civitas Facebook Discussion Thread

This is probably too advanced for POLS 1101, but it is a very interesting revisionist account of America’s dispersed and separated governing institutions. I think I disagree (I think federalism, separation of powers, etc. have prevented more oppression than they have enabled), but he makes an interesting point. And his list of examples of injustices perpetrated and/or permitted by American government is important to keep in mind and to be ready to cite to students. Any realistic account of American institutions in practice would of course have to acknowledge the many ways the institutions have allowed (and perhaps facilitated) abuse of power. The causal (counterfactual) question, however, is whether there would have been more abuse and injustice if institutions were more concentrated and/or centralized.

Link to article

Link to US Civitas Facebook Discussion Thread

This is an interesting criticism of American libertarianism, written by a libertarian, regarding its historical blind spot (at best) about the unfree condition of African Americans (both during and after the time of legal slavery). I think a lot of what Levy writes here is useful to keep in mind when teaching American government, particularly when covering concepts such as limited government, federalism, ideology, civil liberties, and civil rights.

‘Black Liberty Matters‘-Niskanen Center

Link to US Civitas Facebook Discussion Thread

I personally agree with this argument, but I think it is useful for teaching even for those who don’t agree with it. Among other things, it shows how the Declaration of Independence can serve to set the terms of debate in American politics and delineate between that which is acceptable and unacceptable. It can also provoke a discussion about what patriotism requires and whether or not it is a virtue.

Link to article

Link to US Civitas Facebook Discussion Thread

This would be great for provoking discussion of First Amendment freedom of expression. Notice he does not seem to realize that the central issue here is whether the First Amendment protects speech like Spencer’s. If it does, then according to the Supreme Court, the university must allow him to speak (subject to time, place, and manner restrictions) even if it might (due to its controversial nature) create a less than perfectly safe work environment (and even if it therefore violates federal workplace safety laws) unless the speech promotes, and is likely to incite, imminent lawless action. Notice also his claim that allowing Spencer to speak is somehow ignoring the threat of ethnic cleansing Spencer poses. By the Court’s approach (and that endorsed by the ACLU), suppressing expression of the ideas is not the best way to acknowledge and confront them. Rather, it is better to allow them to be expressed so that they are out in the open and then to counteract them with other acts of free speech. I’m not saying SCOTUS / ACLU necessarily have the best approach to this, but that is nevertheless the current understanding of the 1st Amendment in the U.S., and this history professor appears completely unaware of that fact. His argument seems to be, “I’m an expert on the history of National Socialism. It’s an evil ideology. Anyone espousing it is advocating evil. Therefore, it is too dangerous to allow anyone to espouse it.” One need not be an expert on the history of National Socialism to agree with every premise in that argument. But the conclusion doesn’t follow from the premises. According to SCOTUS/ACLU, the evilness of ideas is insufficient grounds for suppressing the expression of the ideas, and such suppression is not the only or best way to confront and defeat such ideas and mitigate any threat they might pose.

‘Do We Have To Fight Nazis Again?’ Professor Says Of Spencer At UF-WUFT

Link to US Civitas Facebook Discussion Thread

This would be excellent for discussing media — especially “new” media — and democracy.

‘Hard Questions: What Effect Does Social Media Have on Democracy?‘-Facebook Newsroom

Link to US Civitas Facebook Discussion Thread

This is a wonderful analysis of the age of social media that brings together many common American Government themes.

‘It’s the (Democracy-Poisoning) Golden Age of Free Speech‘-Wired

Link to US Civitas Facebook Discussion Thread

Another insightful analysis of the current plutocratic tilt of the American regime. This would be a great counterpoint to discuss when covering the American Form of Government. It also covers themes relevant to sections on interest groups, lobbying, and the bureaucracy and Supreme Court as countermajoritarian institutions.

‘America Is Not a Democracy‘-The Atlantic

Link to US Civitas Facebook Discussion Thread

I wonder how many of these millennials have heard about the empirically demonstrated advantages of living under democratic, rather than authoritarian, governments? I wonder if they would still be convinced? This could be a great way to provoke discussion when teaching the American Form of Government, particularly the section on the advantages of democracy.

‘Have millennials given up on democracy?‘

Link to US Civitas Facebook Discussion Thread

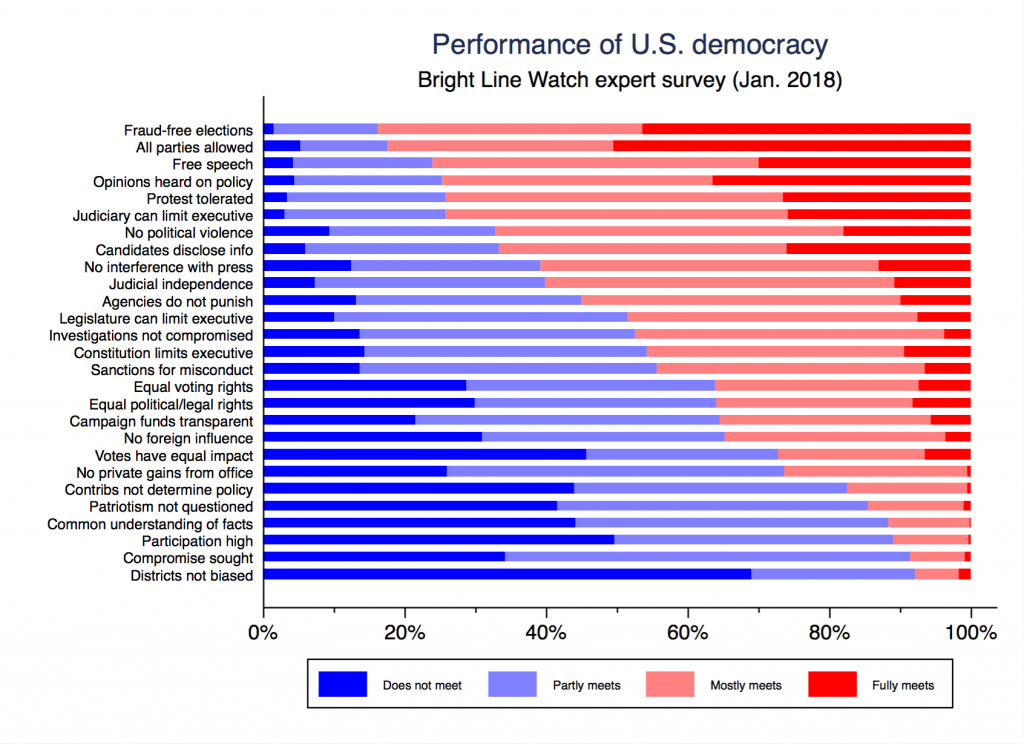

Interesting, though sobering, analysis and discussion about the state of American democracy.

‘American Democracy After Trump’s First Year‘-Bright Line Watch

Link to US Civitas Facebook Discussion Thread